Competitive Research & Product Strategy for AI-Powered Analytics Experiences

ROLE: DESIGN RESEARCH LEAD

CLIENT: MINUTE MEDIA

INDUSTRY: DIGITAL MEDIA & VIDEO TECHNOLOGY

YEAR: 2025

MEDIUM: UX Research, Competitive Analysis, Product Strategy

SCOPE: Competitive Analysis, UX Research, UX Pattern Analysis, Product Strategy

As AI rapidly transformed analytics and reporting tools, Minute Media explored how conversational AI and intelligent assistance could improve complex, data-heavy workflows.

I led a research initiative focused on identifying emerging AI UX patterns, competitive trends, and strategic opportunities across analytics platforms. Alongside the competitive analysis, I designed a Userlytics-based study to better understand user trust, sentiment, and comfort levels around AI-generated insights and reporting workflows.

The Challenge

Traditional analytics platforms often overwhelm users with complexity and technical workflows. AI introduced both new opportunities and uncertainty.

The goal was to uncover opportunities for AI-assisted workflows while ensuring experiences remained intuitive, transparent, and trustworthy.

The challenge was understanding:

Which AI interactions genuinely improved usability

What patterns users already understood and trusted

How leading competitors were implementing AI-assisted workflows

Where conversational UX could reduce friction in analytics tasks

How users emotionally responded to AI-generated reporting and insights

What level of transparency users needed before trusting AI outputs

Research Objectives

The initiative focused on answering several key questions:

Competitive & Product Research

How are modern analytics platforms integrating AI into reporting workflows?

Which AI interaction patterns appear most effective and user-friendly?

What expectations are users developing around AI-assisted analytics?

Which UX patterns are becoming industry standards?

How might AI reduce friction for non-technical users?

User Sentiment & Trust Research

Do users trust AI-generated insights and summaries?

Which analytics tasks are users comfortable automating?

What concerns do users have around AI accuracy and reliability?

How comfortable are users presenting AI-generated reporting content?

What would increase user confidence in AI-assisted analytics workflows?

Competitive Analysis

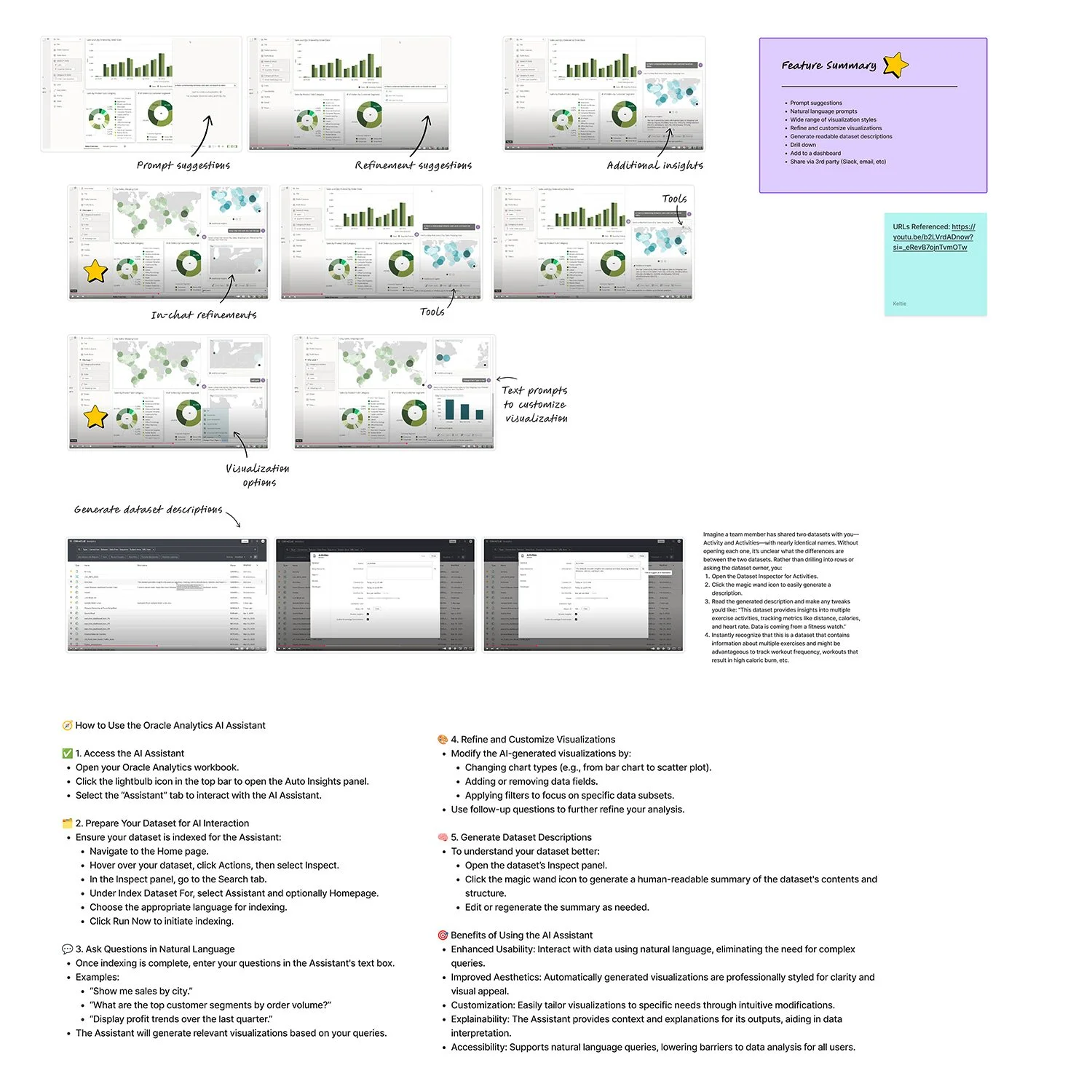

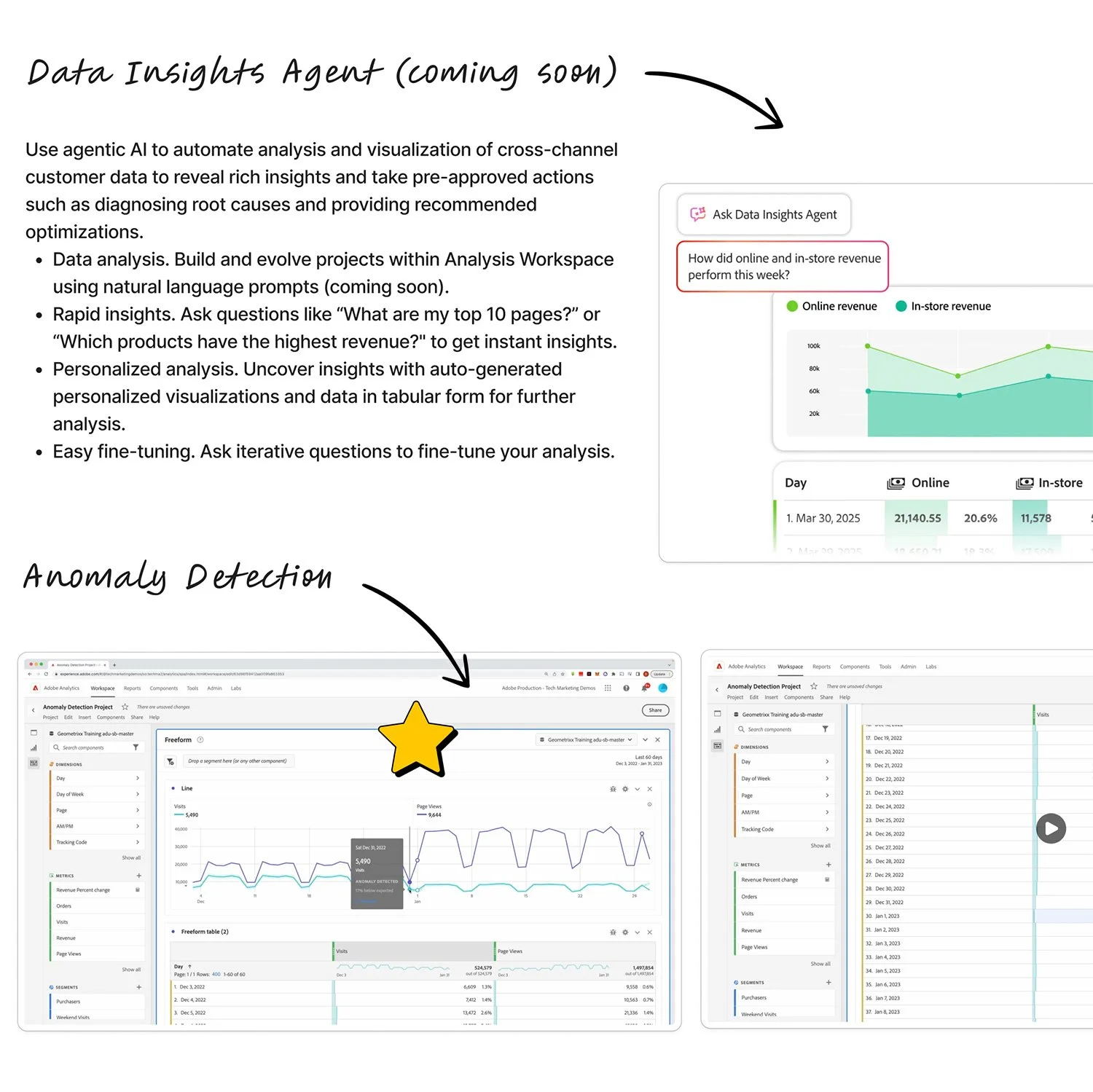

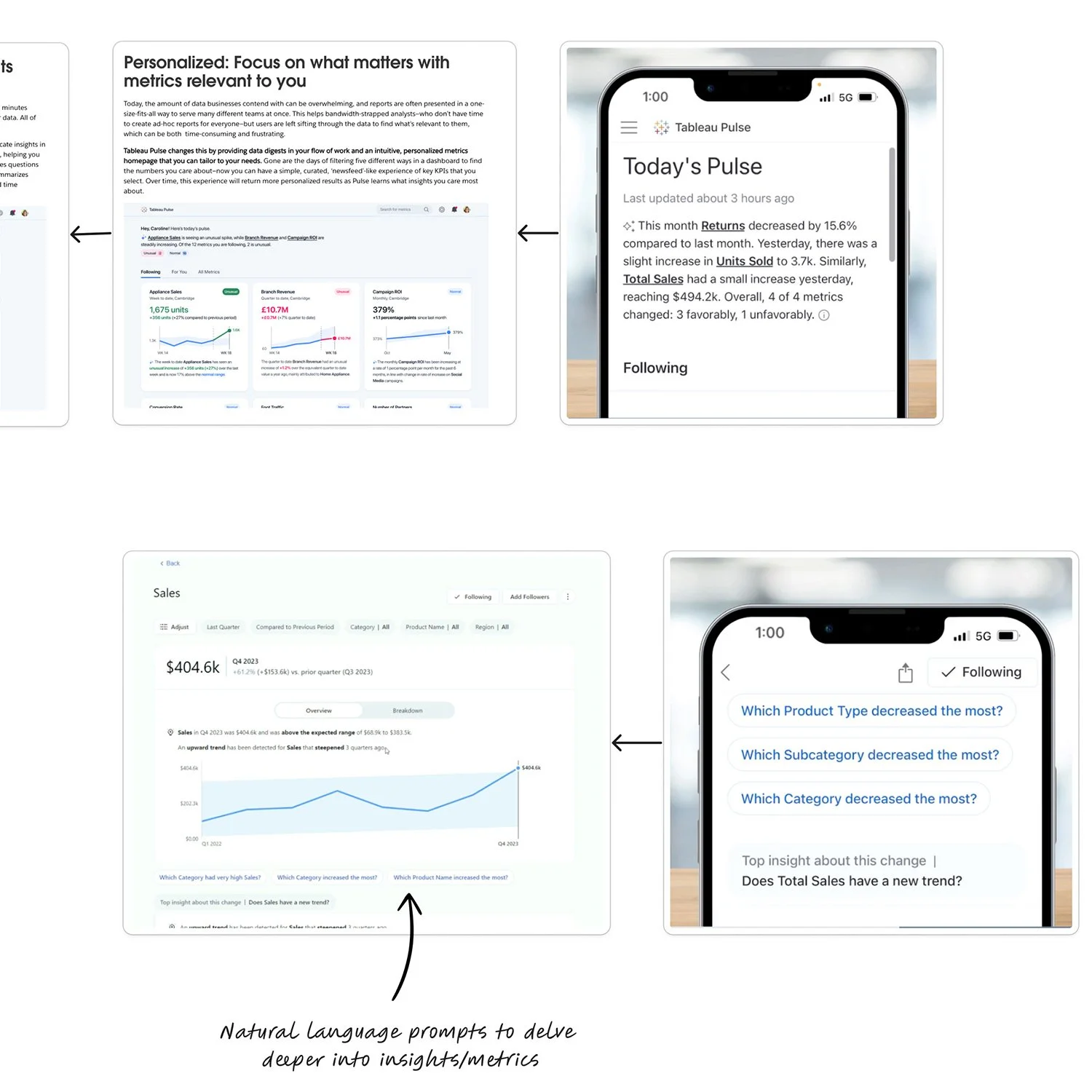

I conducted a large-scale competitive analysis across analytics, BI, advertising, and media platforms to identify recurring AI UX patterns and emerging interaction models.

The research focused on:

Conversational AI interfaces

AI-generated summaries and insights

Prompt-driven workflows

Predictive analytics

AI-assisted visualization

Reporting automation

Guidance and onboarding systems

Explainability and trust-building patterns

AI-generated narratives and storytelling

Platforms Reviewed

Microsoft Power BI

IBM Cognos

Meta Ads Manager

Google Looker

LinkedIn Campaign Manager

Google Ads

YouTube Studio

HubSpot

Oracle Analytics

Klaviyo

Bright

Wistia

JW Player

Vimeo

UX & Product Insights

Natural Language Became the Primary Interaction Model

Users increasingly interacted with analytics tools through conversational prompts rather than manual filtering and report building.

AI Shifted Analytics Toward Storytelling

Guidance Reduced Cognitive Load

Prompt suggestions, recommended filters, and contextual assistance helped users navigate complex systems with less friction.

Collaboration Became Embedded

AI-generated summaries and shareable insights were increasingly integrated into Slack, email, and presentation workflows.

Trust & Transparency Were Critical

The strongest implementations explained how conclusions were generated, helping users validate and trust AI outputs.

Users Wanted Assistance, Not Full Automation

User research revealed that participants were generally open to AI-assisted analytics, but still wanted visibility, editability, and control before relying on AI-generated outputs in professional settings.

Validating AI Trust & Adoption

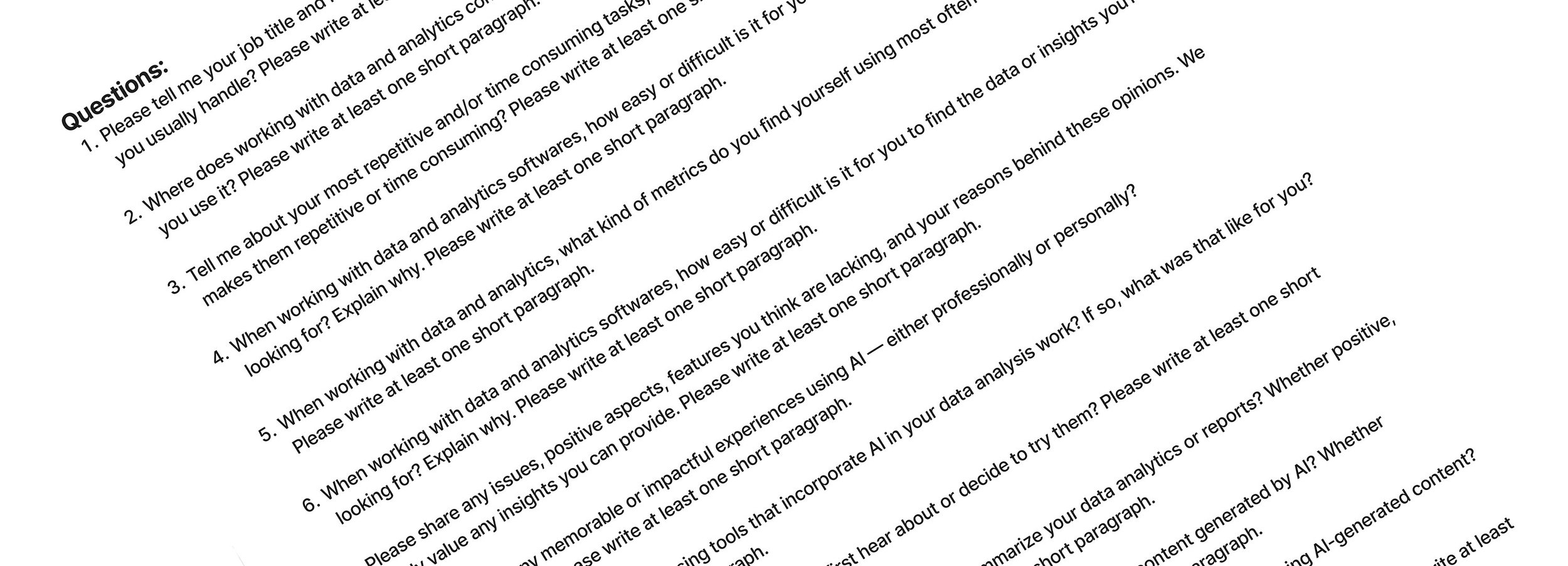

I developed a Userlytics-based unmoderated survey to better understand how users perceived AI within analytics workflows. Twenty-seven users who fit the research criteria completed the survey on video.

The research helped identify both excitement and hesitation around AI-assisted reporting, reinforcing the importance of explainability, user oversight, and human-centered interaction design.

Deliverables:

User survey questionnaires

Unmoderated testing scripts

Userlytics testing strategy documentation

Using surveys and unmoderated research prompts, the study explored:

Whether users trusted AI-generated insights

Which workflows users wanted automated

How users interpreted AI-generated summaries

Where AI interactions created confusion or clarity

What level of transparency users needed to feel confident using AI outputs

How comfortable users felt presenting AI-generated reporting content

What would increase confidence in delegating analytics work to AI

Strategic Recommendations

Based on the research, several opportunities emerged:

Introduce conversational querying

Use AI-generated summaries to surface insights faster

Provide onboarding guidance and prompt suggestions

Embed explainability into AI outputs

Prioritize transparency and user control

Support collaboration and reporting workflows

Outcome & Impact

The research established a foundational understanding of how AI was reshaping analytics experiences and identified emerging UX standards across enterprise platforms.

The work helped clarify:

Which AI patterns users were becoming familiar with

Which trust factors influenced adoption

Where AI could reduce workflow friction

How future AI initiatives could be validated through user research

Reflection

This project strengthened my ability to connect UX research, product strategy, emerging technology trends, and user sentiment analysis into actionable product direction.

One of the biggest takeaways was recognizing that successful AI experiences are not defined by novelty alone; they succeed when they reduce complexity, build trust, and help users make decisions more confidently.

Conducting Userlytics-based trust and sentiment research also reinforced how important transparency, explainability, and user control are when designing AI-assisted workflows for professional environments.

The project ultimately highlighted that understanding emotional trust in AI is just as important as understanding technical capability.